
Running Jobs with Slurm

The basic concepts are described below.

Cluster

A collection of networked computers intended to provide compute capabilities.

Node

One of these computers, also called host.

Frontend

A special node provided to interact with the cluster via shell commands. gwdu101 and gwdu102 are our frontends.

Task or (Job-)Slot

Compute capacity for one process (or “thread”) at a time, usually one processor core, or CPU for short.

Job

A compute task consisting of one or several parallel processes.

Batch System

The management system distributing job processes across job slots. In our case Slurm, which is operated by shell commands on the frontends.

Serial job

A job consisting of one process using one job slot.

SMP job

A job with shared memory parallelization (often realized with OpenMP), meaning that all processes need access to the memory of the same node. Consequently an SMP job uses several job slots on the same node.

MPI job

A job with distributed memory parallelization, realized with MPI. It can use several job slots on several nodes and needs to be started with mpirun or the Slurm substitute srun.

Partition

A label to sort jobs by general requirements and intended execution nodes. Formerly called “queue”.

The sbatch Command: Submitting Jobs to the Cluster

sbatch submits information on your job to the batch system:

- What is to be done? (path to your program and required parameters)

- What are the requirements? (for example partition, number of processes, maximum runtime)

Slurm then matches the job’s requirements against the capabilities of available job slots. Once sufficient suitable job slots are found, the job is started. Slurm considers jobs to be started in the order of their priority.

Available Partitions

We currently have the following partitions, corresponding to broad application profiles:

medium

This is our general purpose partition, usable for serial and SMP jobs with up to 24 tasks, but it is especially well suited for large MPI jobs. Up to 1024 cores can be used in a single MPI job, and the maximum runtime is 48 hours.

fat

This is the partition for SMP jobs, especially those requiring lots of memory. Serial jobs with very high memory requirements also belong in this partition. Up to 24 cores and up to 512 GB are available on one host. Maximum runtime is 48 hours.

The nodes of the fat+ partition are also present in this partition, but will only be used if they are not needed for bigger jobs submitted to the fat+ partition.

fat+

This partition is meant for very memory-intensive jobs, that is, jobs that require more than 512 GB RAM on a single node. Nodes of the fat+ partition have 1.5 or 2 TB RAM. You are required to specify your memory needs on job submission to use these nodes (see resource selection).

As general advice: try your jobs on the smaller nodes in the fat partition first and work your way up, and don't be afraid to ask for help.

gpu

A partition for nodes containing GPUs. Please refer to the GPU selection section.

Runtime limits (QoS)

The default maximum time limit you can request using -t / --time is 48 hours. You can use a “Quality of Service” or QoS to modify this limit on a per job basis. (You still have to specify the actual runtime for your job using -t). Noteworthy QoS are:

normal

Used by default. Unlike other QoS, this does not change/override the per-partition setting for maximum runtime.

2h

Here, the maximum runtime is decreased to two hours. In turn, this QoS has a higher base priority, but it also has limited job slot availability. That means that as long as only a few jobs are submitted using “--qos 2h”, there will be minimal waiting times. This is intended for testing and development, not for massive production.

96h

Increases maximum runtime to 96 hours. This is available on request, but you must have good reasons why you need the increased duration. Under normal circumstances, jobs should not run any longer than 48 hours. You have to prove that you tried and exhausted other technical solutions like snapshotting, splitting the job into independent parts or using more CPUs/nodes to reduce the runtime. If none of these are feasible for your workload, you can get access to this and even longer QoS. The longer the runtime you need, the more time and effort you have to show to have invested without finding a solution.
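The QoS rules above can be condensed into a small helper. This is only a sketch: the function name pick_qos is made up for illustration and is not part of Slurm, and the thresholds are simply the 2, 48 and 96 hour limits stated in this section.

```shell
#!/bin/bash
# pick_qos HOURS: print the QoS from this section that fits a runtime in whole hours.
# pick_qos is a hypothetical helper, not a Slurm command.
pick_qos() {
    if [ "$1" -le 2 ]; then
        echo "2h"        # short test and development jobs
    elif [ "$1" -le 48 ]; then
        echo "normal"    # default, keeps the per-partition limit
    elif [ "$1" -le 96 ]; then
        echo "96h"       # only available on request
    else
        echo "no QoS listed in this section covers more than 96 hours" >&2
        return 1
    fi
}

hours=1
# Dry run: only print the sbatch call that would be used
echo "sbatch --qos=$(pick_qos "$hours") -t ${hours}:00:00 jobscript.sh"
```

Note that the runtime still has to be passed with -t; the QoS only changes the upper limit.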

How to submit jobs

Slurm supports different ways to submit jobs to the cluster: interactively or in batch mode. We generally recommend using the batch mode. If you need to run a job interactively, you can find information about that in the corresponding section.

Batch jobs are submitted to the cluster using the 'sbatch' command and a jobscript or a command:

sbatch <options> [jobscript.sh | --wrap=<command>]

sbatch can take a lot of options to give more information on the specifics of your job, e.g. where to run it, how long it will take and how many nodes it needs. We will examine a few of the options in the following paragraphs. For a full list of options, refer to the manual of the command with 'man sbatch'.
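As a quick illustration of the --wrap form, the following sketch prints an sbatch call instead of executing it, so it can be tried anywhere. The wrapper name submit_dry_run is hypothetical; the partition and time values are just examples.

```shell
#!/bin/bash
# submit_dry_run OPTIONS...: print the sbatch call instead of running it.
# Hypothetical wrapper for illustration; drop the "echo" to submit for real.
submit_dry_run() {
    echo sbatch "$@"
}

# One task on the medium partition for at most 30 minutes, running "hostname"
submit_dry_run -p medium -t 30 -n 1 --wrap="hostname"
```

Because echo drops the shell quoting, a wrapped command that contains spaces has to be quoted again when you copy the printed line.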

sbatch/srun options

-A all

Specifies the account 'all' for the job. This option is mandatory for users who have access to special hardware and want to use the general partitions.

-p <partition>

Specifies in which partition the job should run. Multiple partitions can be specified in a comma separated list.

-t <time>

Maximum runtime of the job. If this time is exceeded the job is killed. Acceptable <time> formats include “minutes”, “minutes:seconds”, “hours:minutes:seconds”, “days-hours”, “days-hours:minutes” and “days-hours:minutes:seconds” (example: 1-12:00:00 will request 1 day and 12 hours).
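To make the time formats more tangible, here is a rough sketch that converts each accepted format into seconds. Slurm does this parsing itself; the helper name slurm_time_to_seconds is made up for this example and performs no input validation.

```shell
#!/bin/bash
# slurm_time_to_seconds TIME: convert a Slurm time string to seconds.
# Hypothetical helper for illustration only.
slurm_time_to_seconds() {
    echo "$1" | awk '{
        days = 0; rest = $0
        i = index(rest, "-")
        if (i > 0) {                      # days-hours[:minutes[:seconds]]
            days = substr(rest, 1, i - 1)
            rest = substr(rest, i + 1)
        }
        n = split(rest, f, ":")
        if (i > 0 || n == 3) { h = f[1]; m = f[2]; s = f[3] }   # hours first
        else if (n == 2)     { h = 0;    m = f[1]; s = f[2] }   # minutes:seconds
        else                 { h = 0;    m = f[1]; s = 0 }      # minutes
        print days * 86400 + h * 3600 + m * 60 + s
    }'
}

for t in 90 10:00 12:00:00 1-12 1-12:00:00; do
    echo "$t = $(slurm_time_to_seconds "$t") seconds"
done
```

A bare number means minutes, so -t 90 requests one and a half hours, not 90 seconds.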

--qos=<qos>

Submits the job using a special QoS. For example, --qos=96h allows for jobs with more than 2 days of runtime (don't forget to increase the runtime with -t as well in this case).

-o <file>

Stores the job output in “file” (otherwise it is written to slurm-<jobid>.out). %J in the filename stands for the jobid.

--noinfo

Some metainformation about your job will be added to your output file. If you do not want that, you can suppress it with this flag.

--mail-type=[ALL|BEGIN|END]

--mail-user=your@mail.com

Receive mails when the jobs start, end or both. There are even more options, refer to the sbatch man-page for more information about mail types. If you have a GWDG-mail-address, you do not need to specify the mail-user.

Resource Selection

CPU Selection

-n <tasks>

The number of tasks for this job. The default is one task per node.

-c <cpus per task>

The number of CPUs per task. The default is one CPU per task.

-c vs -n

As a rule of thumb, if you run your code on a single node, use -c. For multi-node MPI-jobs, use -n.

-N <minNodes[,maxNodes]>

Minimum and maximum number of nodes that the job should be executed on. If only one number is specified, it is used as the precise node count.

--ntasks-per-node=<ntasks>

Number of tasks per node. If both -n and --ntasks-per-node are specified, this option specifies the maximum number of tasks per node.

Memory Selection

By default, your available memory per node is the default memory per task times the number of tasks you have running on that node. You can get the default memory per task by looking at the DefMemPerCPU metric as reported by scontrol show partition <partition>.
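The following sketch shows one way to pull DefMemPerCPU out of the scontrol output and turn it into the default memory for a number of tasks. The sample text is a made-up, shortened stand-in for real scontrol output, and the value 4096 is purely illustrative; the helper name def_mem_per_cpu is also hypothetical.

```shell
#!/bin/bash
# Hypothetical, shortened stand-in for "scontrol show partition medium"
sample='PartitionName=medium
   DefaultTime=01:00:00 MaxTime=2-00:00:00
   DefMemPerCPU=4096 MaxMemPerNode=UNLIMITED'

# Read scontrol output on stdin and print the number after "DefMemPerCPU="
def_mem_per_cpu() {
    grep -o 'DefMemPerCPU=[0-9]*' | cut -d= -f2
}

mb=$(printf '%s\n' "$sample" | def_mem_per_cpu)
tasks_on_node=4
echo "default memory for $tasks_on_node tasks on one node: $(( mb * tasks_on_node )) MB"

# On the cluster you would use the real output instead:
#   scontrol show partition medium | def_mem_per_cpu
```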

--mem=<size[units]>

Required memory per node. The unit can be one of [K|M|G|T], but defaults to M. If your processes exceed this limit, they will be killed.

--mem-per-cpu=<size[units]>

Required memory per allocated CPU instead of per node (with the default of one CPU per task, this is the memory per task). --mem and --mem-per-cpu are mutually exclusive.

--mem-per-gpu=<size[units]>

Required memory per GPU instead of per node. --mem and --mem-per-gpu are mutually exclusive.

Example

-n 10 -N 2 --mem=5G Distributes a total of 10 tasks over 2 nodes and reserves 5G of memory on each node.

--ntasks-per-node=5 -N 2 --mem=5G Allocates 2 nodes and puts 5 tasks on each of them. Also reserves 5G of memory on each node.

-n 10 -N 2 --mem-per-cpu=1G Distributes a total of 10 tasks over 2 nodes and reserves 1G of memory for each task. So the memory per node depends on where the tasks are running.
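To see why the memory per node varies in the last example, consider this small calculation. It assumes one CPU per task and an uneven 7/3 split of the ten tasks, which is just one distribution Slurm might choose; mem_per_node is a hypothetical helper.

```shell
#!/bin/bash
# mem_per_node TASKS_ON_NODE CPUS_PER_TASK MEM_PER_CPU_MB
# Hypothetical helper: memory reserved on one node when --mem-per-cpu is used.
mem_per_node() {
    echo $(( $1 * $2 * $3 ))
}

# -n 10 -N 2 --mem-per-cpu=1G with an assumed 7/3 split of the tasks
echo "node with 7 tasks: $(mem_per_node 7 1 1024) MB"
echo "node with 3 tasks: $(mem_per_node 3 1 1024) MB"
```

With --mem=5G instead, both nodes would reserve the same 5G regardless of how the tasks are split.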

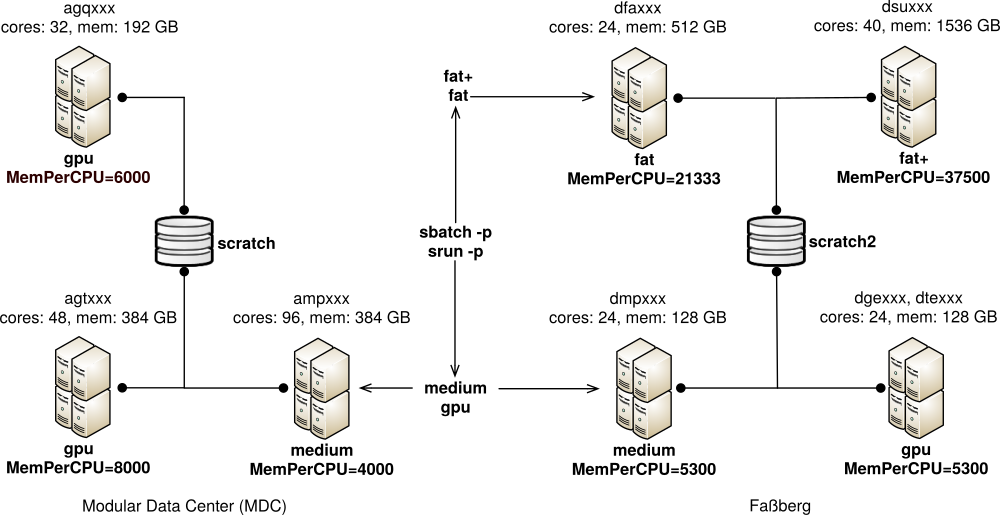

The GWDG Scientific Compute Cluster

This scheme shows the basic cluster setup at GWDG. The shared /scratch is usually the best choice for temporary data in your jobs, but /scratch is only available at the “modular data center” (mdc) resources (select it with -C scratch). The scheme also shows the queues and resources by which nodes are selected using the -p (partition) and -C (constraint) options of sbatch.

sbatch: Specifying node properties with -C

-C scratch

The node must have access to shared /scratch.

-C local

The node must have local SSD storage at /local.

-C mdc / -C fas

The node has to be at that location. It is pretty similar to -C scratch / -C scratch2, since the nodes in the MDC have access to scratch and those at the Fassberg location have access to scratch2. This is mainly for easy compatibility with our old partition naming scheme.

-C [architecture]

Requests a specific CPU architecture. Available options are: haswell, broadwell, cascadelake. See this table for the corresponding nodes.

Using Job Scripts

A job script is a shell script with a special comment section: In each line beginning with #SBATCH the following text is interpreted as a sbatch option. These options have to be at the top of the script before any other commands are executed. Here is an example:

#!/bin/bash
#SBATCH -p medium
#SBATCH -t 10:00
#SBATCH -o outfile-%J

/bin/hostname

Job scripts are submitted by the following command:

sbatch <script name>
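Before submitting, it can help to check a job script locally. The sketch below writes the example script from this section to a temporary file (the file name hostname_job.sh is arbitrary), runs a syntax check and lists the #SBATCH lines that appear before the first command. The awk filter is only an approximation of how sbatch reads the header, not its actual parser.

```shell
#!/bin/bash
# Write the example job script to a temporary directory
workdir=$(mktemp -d)
script="$workdir/hostname_job.sh"
cat > "$script" <<'EOF'
#!/bin/bash
#SBATCH -p medium
#SBATCH -t 10:00
#SBATCH -o outfile-%J

/bin/hostname
EOF

# Syntax check only, nothing is executed
bash -n "$script" && echo "syntax ok"

# Print the #SBATCH lines that come before the first real command.
# Comments and blank lines are skipped, the first command ends the search.
sbatch_header() {
    awk '/^#SBATCH/ { print; next } /^#/ || /^[[:space:]]*$/ { next } { exit }' "$1"
}
sbatch_header "$script"
```

If an #SBATCH line is missing from the listing, it most likely sits below a command and would be treated as an ordinary comment.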

Exclusive jobs

An exclusive job uses all of its allocated nodes exclusively, i.e., it never shares a node with another job. This is useful if you require all of a node's memory (but not all of its CPU cores), or for SMP/MPI hybrid jobs, for example.

Do not combine --exclusive and --mem=<x>. In that case you will get all available tasks on the node, but your memory will still be limited to what you specified with --mem.

To submit an exclusive job add --exclusive to your sbatch options. For example, to submit a single task job, which uses a complete fat node, you could use:

sbatch --exclusive -p fat -t 12:00:00 --wrap="./mytask"

This allocates either a complete gwda node with 256 GB, or a complete dfa node with 512 GB.

For submitting an OpenMP/MPI hybrid job with a total of 8 MPI processes, spread evenly across 2 nodes, use:

export OMP_NUM_THREADS=4
sbatch --exclusive -p medium -N 2 --ntasks-per-node=4 --wrap="mpirun ./hybrid_job"

(each MPI process creates 4 OpenMP threads in this case).
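The numbers in this hybrid example can be checked with a few lines of shell arithmetic. The variable names are chosen for this sketch only.

```shell
#!/bin/bash
# Values taken from the hybrid example: -N 2 --ntasks-per-node=4, OMP_NUM_THREADS=4
nodes=2
tasks_per_node=4
omp_threads=4

mpi_processes=$(( nodes * tasks_per_node ))
threads_per_node=$(( tasks_per_node * omp_threads ))
total_threads=$(( mpi_processes * omp_threads ))

echo "MPI processes:    $mpi_processes"
echo "threads per node: $threads_per_node"
echo "threads in total: $total_threads"
```

Each node therefore needs at least 16 free cores for the threads to run without sharing cores, which is one reason to request the nodes exclusively.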

Disk Space Options

You have the following options for attributing disk space to your jobs:

/local

This is the local hard disk of the node. It is a fast, SSD-based option for storing temporary data, available on dfa, dge, dmp, dsu and dte. There is automatic file deletion for the local disks. If you require your nodes to have access to fast, node-local storage, you can use the -C local constraint for Slurm.

A directory is automatically created for each job at /local/jobs/<jobID> and the path is exported as the environment variable $TMP_LOCAL.

/scratch

This is the shared scratch space, available on amp, agq and agt nodes and frontend login-mdc.hpc.gwdg.de. You can use -C scratch to make sure to get a node with access to shared /scratch. It is very fast, there is no automatic file deletion, but also no backup! We may have to delete files manually when we run out of space. You will receive a warning before this happens. To copy data there, you can use the machine transfer-mdc.hpc.gwdg.de, but have a look at Transfer Data first.

A directory is automatically created for each job at /scratch/tmp/jobs/<jobID> and the path is exported as the environment variable $TMP_SCRATCH. This is backed by a shared SSD pool and therefore extra fast.

/scratch2

This space is the same as scratch described above except it is ONLY available on the nodes dfa, dge, dmp, dsu and dte and on the frontend login-fas.hpc.gwdg.de. You can use -C scratch2 to make sure to get a node with access to that space. To copy data there, you can use the machine transfer-fas.hpc.gwdg.de, but have a look at Transfer Data first.

A directory is automatically created for each job at /scratch/tmp/jobs/<jobID> and the path is exported as the environment variable $TMP_SCRATCH.

$HOME

Your home directory is available everywhere, permanent, and comes with backup. Your attributed disk space can be increased. It is comparatively slow, however. You can find more information about the $HOME file system here.
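Inside a job script, the per-job directories described above can be picked up through their environment variables. The following is a minimal sketch of one possible preference order (node-local SSD first, then shared scratch). The fallback to mktemp is an assumption added here so the snippet also runs outside of a job.

```shell
#!/bin/bash
# Prefer the node-local job directory, then the shared scratch job directory.
if [ -n "${TMP_LOCAL:-}" ] && [ -d "$TMP_LOCAL" ]; then
    workdir=$TMP_LOCAL
elif [ -n "${TMP_SCRATCH:-}" ] && [ -d "$TMP_SCRATCH" ]; then
    workdir=$TMP_SCRATCH
else
    # Fallback for testing outside a job (an assumption of this sketch)
    workdir=$(mktemp -d)
fi

echo "temporary files go to: $workdir"
```

Remember to copy results you want to keep back to $HOME or another permanent location before the job ends.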

Recipe: Using /scratch

This recipe shows how to run Gaussian09 using /scratch for temporary files:

#!/bin/bash
#SBATCH -p fat
#SBATCH -N 1
#SBATCH -n 24
#SBATCH -C scratch
#SBATCH -t 1-00:00:00

# Set up the Gaussian environment
export g09root="/usr/product/gaussian"
. $g09root/g09/bsd/g09.profile

# Set $TMP_SCRATCH as the scratch directory for Gaussian
export GAUSS_SCRDIR=$TMP_SCRATCH

# Run the calculations
g09 myjob.com myjob.log

Please check out the software manual on how to set the directory for temporary files. Many programs have some flags for it, or read environment variables to determine the location, which you can set in your jobscript.

Using /scratch

If you use scratch space only for storing temporary data, and do not need to access data stored previously, you can request /scratch:

#SBATCH -C "scratch"

You can just use /scratch/users/${USERID} for the temporary data.

Interactive session on the nodes

As stated before, sbatch is used to submit jobs to the cluster, but there is also the srun command, which can be used to execute a task directly on the allocated nodes. That command is helpful for starting an interactive session on a node. Use interactive sessions to avoid running large tests on the frontend (a good idea!). You can get an interactive session (with the bash shell) on one of the medium nodes with

srun --pty -p medium -N 1 -c 16 /bin/bash

--pty requests support for an interactive shell, and -p medium the corresponding partition. -c 16 ensures that you get 16 CPUs on the node. You will get a shell prompt as soon as a suitable node becomes available. Single-threaded, non-interactive jobs can be run with

srun -p medium ./myexecutable

If there is a waiting time for running jobs on the medium partition, you can use the int partition for an interactive job that uses CPU resources and gpu-int for an interactive job that also uses GPU resources. The interactive partitions have no waiting time, but you do not get any dedicated resources such as CPU/GPU cores or memory and have to share them with all other users, so you should not use them for non-interactive jobs.

GPU selection

In order to use a GPU, you should submit your job to the gpu partition and request a GPU count and, optionally, the model. The CPUs of the nodes in the gpu partition are evenly distributed among the GPUs. So if you request a single GPU on a node with 20 cores and 4 GPUs, you can get up to 5 cores reserved exclusively for you; the same applies to memory. For example, if you want 2 GPUs of model Nvidia GeForce GTX 1080 with 10 CPUs, you can submit a job script with the following flags:

#SBATCH -p gpu
#SBATCH -n 10
#SBATCH -G GTX1080:2

You can also omit the model selection. Here is an example of selecting 1 GPU of any available model:

#SBATCH -p gpu
#SBATCH -n 10
#SBATCH -G 1

There are different options to select the number of GPUs, such as --gpus-per-node, --gpus-per-task and more. See the sbatch man page for details.
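The even split of CPU cores per GPU described in this section boils down to a simple division. The sketch below uses the 20-core, 4-GPU node from the text as its example; cpus_for_gpus is a hypothetical helper, and other nodes in the gpu partition may have different core and GPU counts.

```shell
#!/bin/bash
# cpus_for_gpus NODE_CORES NODE_GPUS REQUESTED_GPUS
# Hypothetical helper: upper bound of cores you can reserve alongside your GPUs.
cpus_for_gpus() {
    echo $(( $1 / $2 * $3 ))
}

echo "1 GPU  on a 20-core, 4-GPU node: up to $(cpus_for_gpus 20 4 1) cores"
echo "2 GPUs on a 20-core, 4-GPU node: up to $(cpus_for_gpus 20 4 2) cores"
```

This matches the example above, where 2 GPUs are requested together with 10 CPUs.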

Currently we have several generations of Nvidia GPUs in the cluster, namely:

V100: Nvidia Tesla V100
RTX5000: Nvidia Quadro RTX5000
GTX1080: GeForce GTX 1080
GTX980: GeForce GTX 980 (only in the interactive partition gpu-int)

Most GPUs are optimized for single precision (or lower) calculations. If you need double precision performance, tensor units or error correcting memory (ECC RAM), you can select the data center (Tesla) GPUs with

#SBATCH -p gpu
#SBATCH -G V100:1

sinfo -p gpu --format=%N,%G

shows a list of hosts with GPUs, as well as their type and count.
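The comma-separated sinfo output is easy to post-process. The sketch below runs on invented sample lines, since the real node names and counts will differ; it assumes each line holds one node list without commas followed by a single GRES entry of the form gpu:<model>:<count>. The helper name gpu_summary is hypothetical.

```shell
#!/bin/bash
# Invented sample in the shape of "sinfo -p gpu --format=%N,%G" output
sample='NODELIST,GRES
dge[001-007],gpu:gtx1080:2
agq[001-012],gpu:rtx5000:4
agt[001-002],gpu:v100:8'

# Read sinfo output on stdin, skip the header and print "nodes: count x model".
# Assumes no commas inside the node list and exactly one GRES entry per line.
gpu_summary() {
    awk -F, 'NR > 1 { split($NF, g, ":"); printf "%s: %s x %s\n", $1, g[3], g[2] }'
}

printf '%s\n' "$sample" | gpu_summary

# On the cluster: sinfo -p gpu --format=%N,%G | gpu_summary
```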

Miscellaneous Slurm Commands

While sbatch is arguably the most important Slurm command, you may also find the following commands useful:

sinfo

Shows current status of the cluster and queues

squeue

Lists current jobs (default: all users). Useful options are: -u $USER, -p <partition>, -j <jobid>.

scontrol show job <jobid>

Full job information. Only available while the job is running and for a short time thereafter.

squeue --start -j <jobid>

Expected start time. This is a rough estimate.

sacct -j <jobid> --format=JobID,User,UID,JobName,MaxRSS,Elapsed,Timelimit

Gets job information even after the job has finished.

Note on sacct: Depending on the parameters given, sacct chooses a time window in a rather unintuitive way. This is documented in the DEFAULT TIME WINDOW section of its man page. If you unexpectedly get no results from your sacct query, try specifying the start time with, e.g. -S 2019-01-01.

The --format option knows many more fields like Partition, Start, End or State. For the full list, refer to the man page.
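When checking many jobs, the pipe-separated output of sacct -P (short for --parsable2) is convenient to filter. The sketch below works on invented sample lines and prints every job step whose State is not COMPLETED; the job data and the helper name unfinished_steps are made up for illustration.

```shell
#!/bin/bash
# Invented sample in the shape of
#   sacct -j <jobid> -P --format=JobID,State,Elapsed,MaxRSS
sample='JobID|State|Elapsed|MaxRSS
1234|COMPLETED|00:10:02|
1234.batch|COMPLETED|00:10:02|20480K
1235|TIMEOUT|02:00:13|
1235.batch|CANCELLED|02:00:14|1843200K'

# Read sacct -P output on stdin and print steps that did not complete
unfinished_steps() {
    awk -F'|' 'NR > 1 && $2 != "COMPLETED" { print $1, $2, $3 }'
}

printf '%s\n' "$sample" | unfinished_steps
```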

scancel

Cancels jobs. Examples:

scancel 1235 - Send the termination signal (SIGTERM) to job 1235

scancel --signal=KILL 1235 - Send the kill signal (SIGKILL) to job 1235

scancel --state=PENDING --user=$USER --partition=medium-fmz - Cancel all your pending jobs in partition medium-fmz

Have a look at the respective man pages of these commands to learn more about them!